Reputation & Trust in an AI-First World: Why Reviews and Safety Signals Matter More Than Ever

When AI Takes Responsibility for Your Recommendation

When an AI system recommends a healthcare provider, it is implicitly staking its own credibility on that choice.

That risk is far higher in healthcare than in most industries. A bad restaurant recommendation is an inconvenience; a bad healthcare recommendation can cause real harm. As a result, AI-driven search experiences are unusually sensitive to reputation and trust signals—not just what organizations claim about themselves, but what patients, partners, regulators and the wider web corroborate.

For hospitals, health systems and multi-location practices, this means reviews, ratings, sentiment and broader E-E-A-T signals (Experience, Expertise, Authoritativeness, Trustworthiness) now play a central role in whether AI systems are comfortable naming you as an answer at all.

For years, reputation management in healthcare has been viewed as a tactical discipline—something to monitor, respond to and improve incrementally. In AI-powered search environments, that perspective no longer aligns with how reputation works.

Today, reputation isn’t just persuasive—it’s decisive.

As AI systems summarize healthcare information and decide which organizations to surface, reputation acts as a trust filter, determining eligibility for visibility before content is considered.

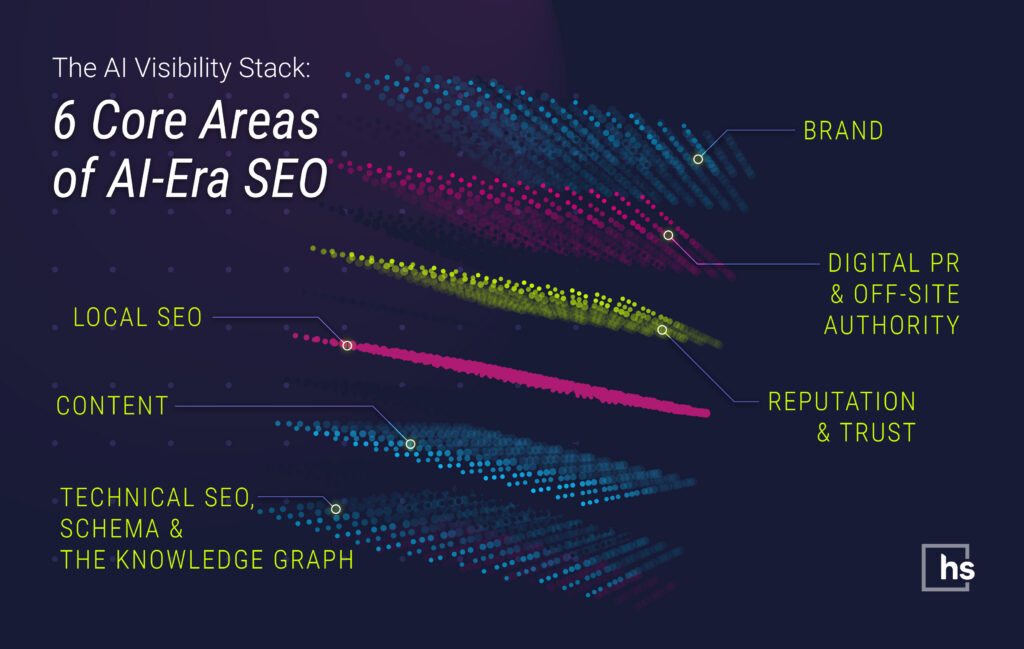

This article is the fifth in-depth look in a seven‑part series on How to Show Up in AI Overviews, ChatGPT, Claude, Gemini and Perplexity for healthcare brands. Our overview introduces the AI Visibility Stack™—six core areas of AI‑era SEO—then links to six deep‑dive playbooks. Together, they’re designed so your marketing, digital and clinical leaders can work from the same framework rather than chase disconnected SEO tips.

1. How AI Interprets Healthcare Reputation

AI systems ingest enormous volumes of public feedback and third-party data—reviews, ratings, press coverage, surveys and social content—and synthesize those inputs to assess risk.

In healthcare, that assessment tends to show up in three distinct ways.

First, risk filtering. Providers with patterns of unresolved complaints, serious reputational issues or consistently poor reviews are less likely to be labeled “best,” “top rated” or even surfaced prominently. AI systems are designed to avoid risky recommendations whenever possible.

Second, preference shaping. When multiple providers meet baseline relevance and proximity requirements, those with stronger, more recent and more detailed positive feedback tend to rise. Reviews don’t just validate quality; they differentiate otherwise similar options.

Third, context building. Review text helps AI understand what you’re known for. Are patients regularly mentioning compassionate bedside manner? Short wait times? Clear explanations? That language frequently reappears—implicitly or explicitly—in AI-generated summaries.

In healthcare, exclusion from AI-powered visibility is often silent. Organizations don’t necessarily rank lower—they simply stop appearing in AI-generated answers altogether.

When confidence is low, AI systems err on the side of caution. They omit providers rather than risk recommending an organization whose reputation signals are inconsistent, incomplete or uncertain. For healthcare brands, that silence is often the first sign that trust thresholds are no longer being met. And when a provider is invisible to AI, a competitor with better trust signals is being recommended by default.

In short, reputation isn’t just read by patients; it’s evaluated and reused by algorithms before patients see their options.

2. Reviews as the New Front Door for Trust

Reviews have long influenced healthcare choice, but their role has expanded as AI mediates discovery.

AI-powered systems and local search platforms usually weigh several review-related signals together:

- Volume: Enough reviews to be statistically meaningful for each location or provider

- Recency: A steady flow of new feedback instead of a stale historical snapshot

- Rating and sentiment: Both the star average and the themes expressed in review text

- Responsiveness: Whether the organization replies professionally, especially to criticism
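For teams that want to track these four signals internally, a rough per-location summary could look like the sketch below. It is illustrative only: the `Review` fields, the `summarize_reviews` helper and the 90-day recency window are assumptions made for this example, and they do not describe how any search or AI platform actually scores reviews.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Review:
    rating: int    # star rating, 1 to 5
    posted: date   # date the review was published
    replied: bool  # True if the organization responded publicly


def summarize_reviews(reviews, today, recent_days=90):
    """Summarize volume, recency, rating and responsiveness for one location.

    The 90-day default is an arbitrary, hypothetical cutoff for "recent".
    """
    total = len(reviews)
    if total == 0:
        return {"volume": 0, "recent_share": 0.0,
                "avg_rating": None, "response_rate": 0.0}
    recent = sum(1 for r in reviews if (today - r.posted).days <= recent_days)
    return {
        "volume": total,
        "recent_share": recent / total,
        "avg_rating": sum(r.rating for r in reviews) / total,
        "response_rate": sum(1 for r in reviews if r.replied) / total,
    }


if __name__ == "__main__":
    sample = [
        Review(5, date(2026, 1, 10), True),
        Review(2, date(2025, 6, 1), False),
        Review(4, date(2026, 1, 20), True),
        Review(5, date(2025, 12, 15), False),
    ]
    print(summarize_reviews(sample, today=date(2026, 2, 1)))
```

Comparing these summaries across locations is one way to spot sites where volume, recency or responsiveness lags before it shows up as a visibility problem.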

A common mistake is assuming that AI systems interpret reviews the way humans do. AI does not focus on personal stories or single incidents. Instead, it analyzes large review datasets to identify recurring patterns and trends.

AI systems identify consistent patterns in feedback over time, across multiple platforms and locations. Stable trends suggest operational reliability, while erratic swings or sudden surges create uncertainty. In healthcare, AI systems tend to treat that uncertainty as added risk.
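One simple way to see how stable your own feedback looks is to measure how much the average rating moves from month to month. The sketch below assumes review data is available as `("YYYY-MM", rating)` pairs; the `monthly_rating_spread` helper is hypothetical, and the standard deviation of monthly averages is only one possible proxy for stability, not a metric any AI system is known to use.

```python
from collections import defaultdict
from statistics import mean, pstdev


def monthly_rating_spread(pairs):
    """Return the standard deviation of monthly average ratings.

    `pairs` is an iterable of ("YYYY-MM", rating) tuples. A value near
    zero means ratings are steady month to month; larger values mean
    the averages swing more.
    """
    buckets = defaultdict(list)
    for month, rating in pairs:
        buckets[month].append(rating)
    monthly_avgs = [mean(buckets[m]) for m in sorted(buckets)]
    if not monthly_avgs:
        return 0.0
    return pstdev(monthly_avgs)


if __name__ == "__main__":
    steady = [("2025-10", 4), ("2025-10", 5), ("2025-11", 5),
              ("2025-11", 4), ("2025-12", 4), ("2025-12", 5)]
    erratic = [("2025-10", 5), ("2025-11", 1), ("2025-12", 5)]
    print("steady spread:", monthly_rating_spread(steady))
    print("erratic spread:", monthly_rating_spread(erratic))
```

A location whose spread is much larger than its peers may deserve a closer look at what changed operationally in the outlier months.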

What’s changed isn’t just that reviews matter, but how they’re used. Rich, current, well-managed reviews make providers appear safer, more transparent and more patient-centric—qualities AI favors when making recommendations.

Research summarized by Moz shows that review signals increasingly influence visibility in local and AI-assisted search, especially in YMYL (Your Money or Your Life) categories such as healthcare, where trust thresholds are higher.

3. Beyond Reviews: Broader Trust and Safety Signals

In an AI-first environment, reputation extends well beyond star ratings.

Search quality systems and generative models also look for corroborating trust signals that reinforce what reviews suggest. These often include:

- Clinical credibility: Accurate, up-to-date content; clear physician credentials; affiliations; and evidence of guideline-based care

- Transparency: Complete contact information, privacy policies, service descriptions and honest representation of capabilities and limitations

- Third-party validation: Mentions in reputable medical publications, awards, conference participation and involvement in recognized initiatives

These items help AI systems answer a core question: Is this organization accountable and reliable enough to recommend?

This fits closely with Google’s long-standing emphasis on E-E-A-T for healthcare content, as summarized in Moz's analysis of search quality guidance.

4. Designing a Modern Healthcare Reputation Program

In 2026, reputation management is no longer about chasing five-star ratings in isolation. It’s about building a continuous trust system that captures feedback, responds appropriately and feeds insight back into operations.

Effective programs typically include:

- Automated, compliant review requests, integrated with patient experience workflows and timed appropriately across locations

- Centralized monitoring, covering Google, healthcare-specific platforms and social channels, with alerts for emerging issues

- Response playbooks, defining how to reply to different feedback types in a HIPAA-safe, brand-consistent way

- Sentiment analysis and reporting, surfacing repeated problems like access barriers, communication lapses or billing friction

Done well, reputation management operates as both a trust engine and an early warning system—highlighting problems before they intensify into visibility or patient-experience risks.

5. Where AI Tools Fit—and Where They Don’t

AI tools are increasingly embedded in reputation platforms, but the same principle applies here as in SEO: AI should assist, not replace, human judgment.

High-value uses include:

- Sentiment and theme analysis across large volumes of feedback

- Drafting response suggestions for staff to review and personalize

- Flagging reviews that refer to safety, discrimination or serious dissatisfaction
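As a minimal illustration of the flagging use case, the sketch below routes reviews to a human when they contain escalation terms. The `ESCALATION_TERMS` lists and the `flag_review` helper are hypothetical and deliberately simplistic; a real program would need clinically and legally reviewed criteria, and plain keyword matching will miss many cases.

```python
# Hypothetical escalation categories and trigger terms, for illustration only.
ESCALATION_TERMS = {
    "safety": ["unsafe", "infection", "wrong medication", "injury"],
    "discrimination": ["discriminat", "racist", "harass"],
    "serious_dissatisfaction": ["lawsuit", "malpractice", "never again"],
}


def flag_review(text):
    """Return the sorted list of escalation categories a review matches.

    Matching is a case-insensitive substring check, so "discriminat"
    catches both "discriminated" and "discrimination".
    """
    lowered = text.lower()
    return sorted(
        category
        for category, terms in ESCALATION_TERMS.items()
        if any(term in lowered for term in terms)
    )


if __name__ == "__main__":
    reviews = [
        "I was given the wrong medication and felt unsafe.",
        "Friendly staff, short wait.",
        "The receptionist was racist; we are considering a lawsuit.",
    ]
    for review in reviews:
        categories = flag_review(review)
        if categories:
            # Flagged reviews go to a person; nothing is answered automatically.
            print("ESCALATE", categories, "->", review)
```

The output is a queue for staff, not a reply: the flag only decides who looks at the review first.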

Risky uses include:

- Fully automated public replies without human oversight

- Generating or incentivizing fake reviews

- Using patient-identifiable data outside HIPAA-compliant environments

AI can reduce friction in a complex, multi-platform job—but tone, accountability and escalation must remain human-owned.

6. Connecting Reputation Back to AI Search Visibility

Reputation should not live in a silo. In an AI-first world, it directly influences search visibility and the likelihood of recommendations.

High-performing organizations intentionally connect reputation data to:

- Local SEO strategy, prioritizing underperforming locations or specialties before pushing “best near me” visibility

- Content and E-E-A-T, reinforcing common positive themes from reviews in service pages, bios and FAQs

- AI monitoring, checking how AI Overviews and chat tools summarize the brand, and whether those summaries reflect the current reality

Over time, integrating patient experience, reputation management and SEO helps brands remain resilient as AI reshapes healthcare discovery.

As Gartner research shows, a majority of consumers distrust AI-powered search summaries and want more control over them—underscoring how trust, credibility and risk mitigation are central to whether AI search and recommendations feel safe and useful to users.

Trust within AI-driven healthcare discovery builds over time. AI systems depend heavily on historical signals, placing greater weight on consistency and corroboration than on short-term optimization.

Organizations that steadily strengthen credibility—across reviews, content, third-party validation and process transparency—gain durable advantages in AI-mediated visibility. Those that allow trust signals to fragment may find confidence difficult to rebuild once it’s lost.

How Healthcare Success Implements Reputation and Trust at Scale

When we help healthcare organizations strengthen their reputation in an AI-first search environment, we treat reviews and trust signals not as a reactive function, but as a core input into visibility, recommendation and growth.

Our approach is crafted to reduce uncertainty for AI systems by ensuring patient experience signals, brand messaging and clinical credibility consistently reinforce one another.

In practice, that means:

- Reputation as a system, not a campaign

Healthcare Success helps organizations design repeatable, compliant workflows for capturing patient feedback across locations and service lines, so reputation represents ongoing reality, not one-off pushes for reviews.

- Centralized control with local execution

Multi-location organizations need governance without losing authenticity. We create centralized standards for review requests, responses and escalations while enabling local teams to engage appropriately with patients.

- AI-aware response plans

We craft response playbooks that integrate empathy, compliance and clarity, recognizing that responses are read not just by patients but by AI systems evaluating accountability and professionalism.

- Sentiment tied to operational insight

Review data is analyzed for recurring themes (access delays, communication lapses, billing friction) and routed back to marketing, operations and leadership as actionable intelligence, not vanity metrics.

- Integration with SEO and content strategy

Healthcare Success aligns reputation insights with local SEO, service-line content and E-E-A-T signals. Positive patient language reinforces on-site messaging; recurring concerns inform where trust needs to be rebuilt before pushing visibility.

- Monitoring how AI summarizes the brand

Reputation management now includes observing AI Overviews and conversational tools to see how organizations are described. When summaries drift from reality, we trace the source signals and correct them upstream.

- Ongoing governance and risk prevention

Reputation erodes quietly when left unmanaged, especially after acquisitions, rebrands or service expansions. We help organizations implement recurring audits to keep trust signals current and credible over time.

The goal isn’t simply to look good online. It’s to create a durable trust footprint—one that patients believe and AI systems feel safe amplifying when recommending care.

This blog post is the fifth deep-dive in a seven-part series. To keep building a unified AI Visibility Stack, we encourage you to continue reading the rest of the series:

- Brand in an AI-First Search World

- Content That AI Loves to Cite

- Technical SEO & Schema for AI

- Local SEO in the Age of AI

- Off‑Site Digital PR in an AI World

Mini-FAQ: Reputation & Trust in an AI-First World

Q: How many reviews do we really need per location or provider?

There’s no fixed threshold, but competitive markets commonly favor locations with dozens—or hundreds—of recent reviews. The goal is steady, compliant growth, so a few outliers don’t define perception.

Q: Do AI systems read review text or just star ratings?

Both. AI systems examine review language to identify themes like access, communication and care quality, then reuse that context in summaries and comparisons.

Q: How should we respond to negative reviews?

Promptly and professionally, without sharing PHI. Acknowledge the concern, invite offline resolution and avoid defensiveness. Thoughtful responses signal accountability to both patients and AI systems.

Q: Does reputation management really affect AI search visibility?

Yes. Reviews and broader trust signals feed the same systems that power AI Overviews and local recommendations. Organizations that proactively manage their reputations tend to surface more often—and more favorably—when patients ask AI tools who they should trust.
